AI Coding Tools Are Only as Good as Your Engineering Foundation

A fintech team rolled out Copilot in Month 1. Velocity spiked 28% in weeks 2-4. Developers raved. Management celebrated. Then in week 5, regressions tanked user confidence. A subtle state machine bug slipped through. An off-by-one in a payment reconciliation loop. Test coverage was 41%. Good enough for traditional code review, but not for AI-amplified development. They disabled Copilot. Called it a failed experiment. This isn't an outlier.

This is the predictable outcome of bolting AI onto weak engineering practices.

AI coding tools aren't a productivity multiplier on their own. They amplify whatever engineering culture is already in place: they accelerate existing practices and expose gaps that manual review used to hide. If your test coverage is 41%, AI will write code that passes your tests and breaks in production. If your PRs are vague, AI will write code that ships fast and breaks at runtime. The faster you go, the more expensive those gaps become.

The teams seeing the biggest wins aren't the ones with the best AI tools. They're the ones with the best engineering foundations.

The Adoption Progression

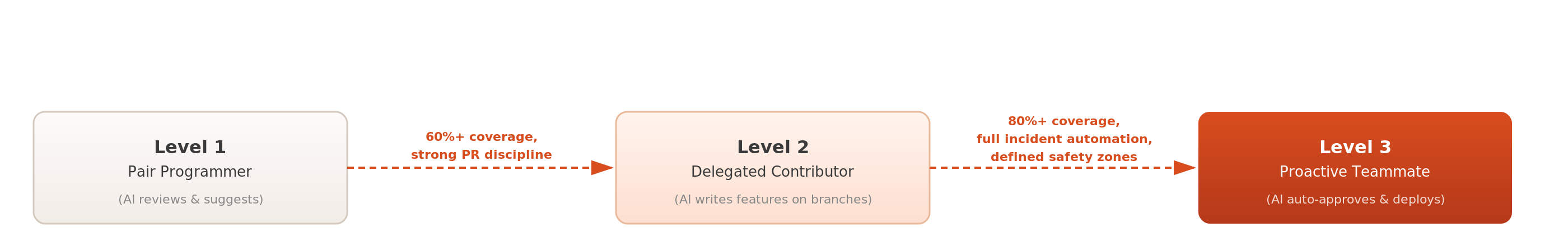

There are three distinct adoption levels, and they require different engineering foundations.

Level 1, Pair Programmer: AI suggests code through autocomplete and comment-driven completions. You review every suggestion. Requires baseline test coverage (35%+) and clear PR context. Impact: 10-15% velocity gain. Risk: low. Typical adoption: weeks 1-4.

Level 2, Delegated Contributor: AI writes full features on feature branches. You run the test suite and review the diff. Requires strong test coverage (60%+), scoped tickets, and a runbook for rollback. Impact: 25-35% velocity gain. Risk: moderate. Typical adoption: weeks 5-8.

Level 3, Proactive Teammate: AI self-approves and deploys to scoped domains (e.g., "dashboard refactoring," "payment reconciliation validation"). Requires strong test coverage (80%+), full incident automation, and explicit AI-safe code zones. Impact: 40-50% velocity gain. Risk: high if foundations are weak. Typical adoption: weeks 9+.

Most teams should spend 4-6 weeks at Level 1 before advancing. Teams that rush to Level 2 without the diagnostic work end up like the fintech team.

The Foundation Diagnostic

Now that you know the levels, here's what your engineering foundation needs to look like before you can safely operate at each one. Each column shows the minimum threshold required. If you're below the line for your target level, close the gaps first.

The cost of moving fast with weak foundations compounds with every AI-assisted commit.
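As a rough sketch, the requirements listed for each adoption level can be written down as a check you run before advancing. The coverage thresholds (35%, 60%, 80%) and the other requirements come from the level descriptions above; the `Foundation` class, its field names, and the example values are assumptions made for illustration, not a standard tool.

```python
from dataclasses import dataclass


@dataclass
class Foundation:
    """Self-reported snapshot of a team's foundation (hypothetical field names)."""
    test_coverage_pct: float
    clear_pr_context: bool
    scoped_tickets: bool
    rollback_runbook: bool
    incident_automation: bool
    ai_safe_zones_defined: bool


def max_safe_level(f: Foundation) -> int:
    """Return the highest adoption level (0-3) whose requirements are all met."""
    level1 = f.test_coverage_pct >= 35 and f.clear_pr_context
    level2 = (level1 and f.test_coverage_pct >= 60
              and f.scoped_tickets and f.rollback_runbook)
    level3 = (level2 and f.test_coverage_pct >= 80
              and f.incident_automation and f.ai_safe_zones_defined)
    if level3:
        return 3
    if level2:
        return 2
    if level1:
        return 1
    return 0


# Example: 41% coverage and (assumed) clear PR context, nothing else in place.
team = Foundation(
    test_coverage_pct=41,
    clear_pr_context=True,
    scoped_tickets=False,
    rollback_runbook=False,
    incident_automation=False,
    ai_safe_zones_defined=False,
)
print(max_safe_level(team))  # 1
```

Each level builds on the one below it, so a team with 85% coverage but no rollback runbook still stops at Level 1.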

The Regression Testing Blind Spot

AI writes code that passes your tests. That's the problem.

Consider this pattern: a state machine managing financial transactions (the logic that tracks whether a payment is pending, authorized, captured, or refunded). The AI sees the happy path in your test suite (state A → state B → state C). It writes clean code that handles these states perfectly. But what happens when a transaction enters state B, gets interrupted, and needs to recover? Your tests didn't cover that edge case. AI didn't know to look for it.
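One way to close that gap is to test every (state, event) pair instead of only the happy path. The sketch below is a minimal illustration, not a real payment system: the four states come from the paragraph above, while the `Event` names, the `TIMEOUT` recovery transition, and the helper functions are assumptions.

```python
from enum import Enum
from itertools import product


class State(Enum):
    PENDING = "pending"
    AUTHORIZED = "authorized"
    CAPTURED = "captured"
    REFUNDED = "refunded"


class Event(Enum):
    AUTHORIZE = "authorize"
    CAPTURE = "capture"
    REFUND = "refund"
    TIMEOUT = "timeout"  # hypothetical interruption event


# Allowed transitions. Anything not listed here is rejected explicitly.
TRANSITIONS = {
    (State.PENDING, Event.AUTHORIZE): State.AUTHORIZED,
    (State.AUTHORIZED, Event.CAPTURE): State.CAPTURED,
    (State.CAPTURED, Event.REFUND): State.REFUNDED,
    # Assumed recovery path: an interrupted authorization goes back to pending.
    (State.AUTHORIZED, Event.TIMEOUT): State.PENDING,
}


class InvalidTransition(Exception):
    pass


def step(state: State, event: Event) -> State:
    """Apply one event, raising on any transition that is not allowed."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise InvalidTransition(f"{event.name} not allowed in {state.name}") from None


def check_all_pairs() -> dict:
    """Exercise every (state, event) pair; None marks a rejected transition."""
    outcomes = {}
    for state, event in product(State, Event):
        try:
            outcomes[(state, event)] = step(state, event)
        except InvalidTransition:
            outcomes[(state, event)] = None
    return outcomes


outcomes = check_all_pairs()
allowed = [pair for pair, result in outcomes.items() if result is not None]
print(len(outcomes), len(allowed))  # 16 4
```

A test suite that asserts on all 16 outcomes tells the AI, and the reviewer, what must happen in the 12 cases the happy path never touches.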

The AI can pass 95% of unit tests while introducing subtle failures that only surface under load, in production, with real concurrency patterns.

Detection method: Before delegating to AI, run your test suite through a mutation testing framework (PIT for Java, Stryker for JavaScript/TypeScript, C#, and Scala). If coverage is high but mutation score is low, your tests execute the code without actually checking its behavior. The AI will exploit those gaps at scale.
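To make the difference between coverage and mutation score concrete, here is a hand-rolled toy version of what a mutation framework automates. It does not use PIT or Stryker; the function names and the two mutants are invented for illustration.

```python
def count_unmatched(ledger, bank):
    """Original: count ledger entries that have no matching bank entry."""
    unmatched = 0
    for i in range(len(ledger)):
        if ledger[i] not in bank:
            unmatched += 1
    return unmatched


def mutant_off_by_one(ledger, bank):
    """Mutant 1: the loop skips the last ledger entry."""
    unmatched = 0
    for i in range(len(ledger) - 1):
        if ledger[i] not in bank:
            unmatched += 1
    return unmatched


def mutant_flipped_condition(ledger, bank):
    """Mutant 2: counts matched entries instead of unmatched ones."""
    unmatched = 0
    for i in range(len(ledger)):
        if ledger[i] in bank:
            unmatched += 1
    return unmatched


def weak_test(fn) -> bool:
    # Runs every line of the original (100% line coverage),
    # but only checks the return type.
    return isinstance(fn([1, 2, 3], [1, 2]), int)


def strong_test(fn) -> bool:
    # Checks the actual result: only entry 3 is unmatched.
    return fn([1, 2, 3], [1, 2]) == 1


def mutation_score(test, mutants) -> float:
    """Fraction of mutants the test detects (a failing test kills the mutant)."""
    killed = sum(1 for mutant in mutants if not test(mutant))
    return killed / len(mutants)


MUTANTS = [mutant_off_by_one, mutant_flipped_condition]
print(mutation_score(weak_test, MUTANTS))    # 0.0
print(mutation_score(strong_test, MUTANTS))  # 1.0
```

Both tests pass against the original and both produce the same coverage number. Only the mutation score shows that the first one would let an off-by-one through.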

Real example: A financial reconciliation service I built with AI assistance passed all unit tests, all integration tests, and even made it through initial production deployment. In week 2, under 1000x load, a race condition emerged in the reconciliation loop. The AI had written correct code for the synchronous path covered by the automated tests, but hadn't considered the async failure mode, which we had only ever tested manually.
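The service from that story isn't shown here. As a hedged illustration of the general pattern, the sketch below uses a made-up `Reconciler` class with a check-then-act step, plus a small concurrent stress test of the kind that turns a manually tested failure mode into an automated one. All names and parameters are assumptions.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Reconciler:
    """Hypothetical stand-in for the shared state of a reconciliation loop."""

    def __init__(self):
        self.settled = {}
        self._lock = threading.Lock()

    def settle(self, payment_id: int, amount: int) -> bool:
        """Settle a payment once; return False if it was already settled."""
        # The check and the write must happen atomically. Without the lock,
        # two workers can both pass the check and settle the same payment.
        with self._lock:
            if payment_id in self.settled:
                return False
            self.settled[payment_id] = amount
            return True


def stress(workers: int = 16, payments: int = 200, duplicates: int = 5):
    """Submit every payment several times from many threads at once."""
    reconciler = Reconciler()
    jobs = [(payment_id, 100) for payment_id in range(payments)] * duplicates
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda job: reconciler.settle(*job), jobs))
    return reconciler, results


reconciler, results = stress()
# Each payment should be settled exactly once, no matter the interleaving.
print(len(reconciler.settled), sum(results))  # 200 200
```

A test like this won't prove the absence of races, but it puts the concurrent path into the suite the AI is working against.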

Automation caught it before it reached customers. The point stands, though: good test coverage isn't enough. Tests need to constrain the AI's hypothesis space.

Code Categories That Demand Heavy Human Review

Some code categories require thorough human-in-the-loop review whether AI is involved or not. The margin for error is too low and the testing perimeter is too large to rely on any single review mechanism, human or AI.

- Cryptographic code. Off-by-one in padding? Invisible in tests. Production disaster.

- State machines with side effects. Particularly in financial, medical, or compliance domains. Edge cases compound across states.

- Resource-constrained code. Memory allocation, garbage collection tuning, tight loops. AI optimizes for readability first, performance second.

- Complex numerical code. Floating-point arithmetic, rounding edge cases, large number handling. The math is domain-specific.

- Security-critical code. Input validation, auth boundaries, privilege checks. These are judgment calls more than code generation.

As your foundation strengthens and your observability improves, AI can take on more of the heavy lifting in these areas. But the teams I've seen move fastest are the ones that have explicit categories for what level of human review each part of the codebase requires, with or without AI in the loop.
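Explicit categories work best when they live in the repository and can be checked by tooling. Here is one minimal way to sketch that, assuming a path-based layout; the directory patterns and review-level labels are hypothetical and would need to match your own codebase.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns mapped to a review level. The first match wins,
# so list the most restrictive zones first.
ZONES = [
    ("src/crypto/*", "pair-programmer-only"),
    ("src/payments/*", "pair-programmer-only"),
    ("src/dashboard/*", "delegated"),
    ("tools/internal/*", "delegated"),
]
DEFAULT_LEVEL = "tbd"  # unmapped code has not been through the diagnostic yet


def review_level(path: str) -> str:
    """Return the review level for a file path, or the default if unmapped."""
    for pattern, level in ZONES:
        if fnmatchcase(path, pattern):
            return level
    return DEFAULT_LEVEL


for changed in ["src/crypto/padding.py", "src/dashboard/charts.py", "src/billing/tax.py"]:
    print(changed, "->", review_level(changed))
```

A CI step could run this over the files in a diff and block delegated changes that touch a restricted zone. Treating unmapped paths as `tbd` rather than safe keeps new code from slipping into the delegated category by default.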

The Playbook

A realistic adoption timeline is 8 to 12 weeks for most teams. Here's how it breaks down.

Weeks 1-2, Diagnostic & Baseline

- Run test coverage and mutation analysis

- Audit 10 recent PRs: clarity, scope, acceptance criteria

- Map code zones (AI-safe, AI-unsafe, TBD)

- Close the highest-priority foundation gaps before introducing any AI tooling

- Artifact: Foundation Gap Report, Coverage Heatmap, Safety Zone Document

Weeks 3-5, Pair Programmer Validation

- Deploy AI (Copilot, Cursor, Claude Code) in autocomplete mode only

- Require explicit review for every suggestion

- Log all suggestions that passed review but could have caused issues

- Give the team time to build intuition for where AI helps and where it hallucinates

- Artifact: Defect Log, Velocity Report (should show 8-12% improvement, not 30%)

Weeks 6-8, Evaluation Harness

- Build regression test suite targeting the edge cases AI generates most often

- Run mutation testing on AI-generated code vs. human code

- Create evaluation dataset of "AI suggestions we rejected and why"

- Artifact: Evaluation Framework, Regression Test Suite, Exclusion List
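The "suggestions we rejected and why" dataset can start as something very small. The sketch below assumes a flat record with a free-form summary and a short reason tag; the field names, reason tags, and sample records are invented for illustration.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass


@dataclass
class RejectedSuggestion:
    """One AI suggestion that a reviewer turned down (hypothetical schema)."""
    file: str
    summary: str
    reason: str  # short tag, e.g. "missing-edge-case"


def to_jsonl(records) -> str:
    """Serialize records as JSON Lines so the log is easy to append to and diff."""
    return "\n".join(json.dumps(asdict(record)) for record in records)


def top_reasons(records, n: int = 3):
    """Most common rejection reasons: candidates for new regression tests."""
    return Counter(record.reason for record in records).most_common(n)


# Invented sample records, for illustration only.
log = [
    RejectedSuggestion("src/payments/recon.py", "loop skips last batch", "off-by-one"),
    RejectedSuggestion("src/payments/state.py", "no recovery from interrupted auth", "missing-edge-case"),
    RejectedSuggestion("src/api/orders.py", "no handling of empty cart", "missing-edge-case"),
]

print(to_jsonl(log[:1]))
print(top_reasons(log))
```

The reason tags that show up most often are a reasonable first target for the regression suite described in this phase.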

Weeks 9-12, First Delegated Features

- Pick a non-critical feature (dashboard UI, internal tool, documentation generation)

- AI writes full feature on a branch

- Run full test suite, mutation analysis, and staged rollout

- Measure: defect rate, velocity, time to production

- Expand delegation scope based on results

- Artifact: Feature Velocity Report, Incident Log, Process Playbook for next delegation
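The three measurements in this phase only help if AI-delegated and human-written features are tracked the same way. A minimal sketch of that comparison follows; the `Delivery` record and the sample numbers are assumptions, not data from any real team.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Delivery:
    """One shipped feature (hypothetical record)."""
    author: str  # "ai" for delegated features, "human" otherwise
    hours_to_production: float
    defects: int


def summarize(deliveries, author: str):
    """Defect rate and median time to production for one author type."""
    rows = [d for d in deliveries if d.author == author]
    if not rows:
        return None
    return {
        "features": len(rows),
        "defects_per_feature": sum(d.defects for d in rows) / len(rows),
        "median_hours_to_production": median(d.hours_to_production for d in rows),
    }


# Invented sample data, for illustration only.
shipped = [
    Delivery("ai", 10, 1),
    Delivery("ai", 20, 0),
    Delivery("ai", 30, 2),
    Delivery("human", 40, 0),
    Delivery("human", 60, 1),
]

print(summarize(shipped, "ai"))
print(summarize(shipped, "human"))
```

Putting the two summaries side by side in the Feature Velocity Report gives the decision to expand delegation scope something concrete to rest on.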

The Cost/Velocity Tradeoff

Here's the uncomfortable part: shipping fast with weak foundations is sometimes legitimate. But only for non-critical paths.

- MVP in non-sensitive domain (dashboards, internal tools). Strong case for skipping to Level 2 early. Failure is forgivable, learning is fast. Cost: rework, support burden.

- Payments, healthcare, compliance. There is no shortcut. Level 1 foundation work is non-negotiable. Cost: 6 weeks baseline work. Benefit: 6-month production confidence.

- State machine or crypto code. Accept that AI remains at pair-programmer level indefinitely. Cost: lower velocity in specific domains. Benefit: you sleep at night.

The fintech team could have ended up shipping faster with AI, but they chose to move fast before building the foundation. By week 5, they'd paid for it in downtime and regression risk that far exceeded the velocity gains.

What This Means

The bottleneck isn't the AI. It's your ability to integrate it without breaking production. Teams that diagnose their foundation, fix the gaps in order, and then unlock AI adoption level by level are the ones shipping in weeks. Teams that bolt AI onto broken practices and hope for the best end up with the tools disabled and the developers demoralized.

The engineering still matters. You need to architect properly and know when AI is wrong. But with the right foundation, you can move incredibly fast.

Where does your organization stand?

- Can you run mutation testing on your codebase today? If not, you have no way of knowing how much your coverage numbers overstate the quality of your tests.

- Do your PRs include acceptance criteria and scoped domains, or are they feature-level and broad?

- Do you have automated rollback and incident response? If manual, you're not ready for Level 2.

- Can you name the code zones where AI is unsafe in your codebase? If not, you haven't done the diagnostic work yet.